Abe Wandersman and Lawrence M. Scheier are co-editing a special double issue on Strengthening the Science and Practice of Implementation Support: Evaluating the Effectiveness of Training and Technical Assistance Centers. The special issue will be published in the first half of 2024. Click on the following title to review this preliminary draft: Strengthening the Science and Practice of Implementation Support: Evaluating the Effectiveness of Training and Technical Assistance Centers. Contributed by founder Abe Wandersman


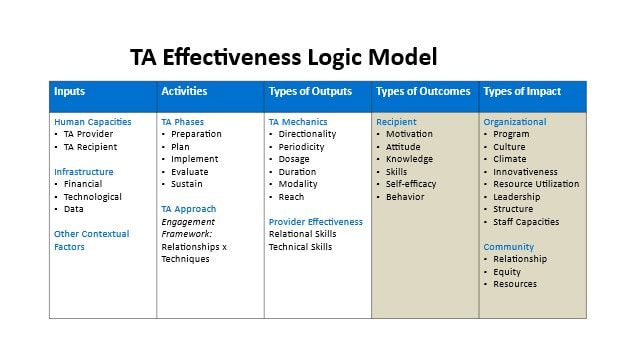

In 2022, we and our colleagues published the journal article: A Scoping Review of the Evaluation and Effectiveness of Technical Assistance in Implementation Science Communications, which summarizes the state of the science of technical assistance (TA) evaluation and effectiveness. We found that much of the TA that is conducted is reactive/responsive, experiential, without a systematic approach, and output-oriented (e.g., number of meetings, length of meetings, and topics discussed). The expected outcomes are often not stated, or perhaps not even thought about. At other times, expected outcomes are overly ambitious, given the real-life challenges on the ground, such as the limited ability of TA to directly influence the actual implementation of the recipient’s learning. Therefore, we developed a TA Effectiveness Logic Model to help explicate logically what might be expected from different inputs and activities of TA. The TA Effectiveness Logic Model that we use in our practice is a skeletal frame to guide TA planning, implementation, and evaluation. This logic model illustrates a theory of change for a set of TA activities using the domains of inputs, processes, outputs, and outcomes. Moreover, the TA Effectiveness Logic Model may be a valuable tool for developing reporting standards. We have used versions of the logic model in a number of projects that have included a large training and TA center funded by the U.S. Department of Health and Human Services’ Assistant Secretary for Planning and Evaluation to educate healthcare organizations about communicable diseases and CADCA (Community Anti-Drug Coalitions of America), a large training and TA center that works with hundreds of community coalitions for substance abuse prevention. The figure below illustrates an example of this TA Effectiveness Logic Model. (For your convenience, the article title above is a hyperlink that will forward to the scoping review.)
Contributed by Victoria Scott and founder Abraham Wandersman Suggested citation: Wandersman, A. and Scott, V. A (2022). Technical Assistance (TA) Effectiveness Logic Model, www.wandersmancenter.org.

Many of the solutions to our major social problems (e.g., homelessness, drug overdoses, children with mental health problems and declining test scores, social isolation of the elderly) are like a jigsaw puzzle with pieces that don’t fit together, and we may have missing pieces and don’t even know it. I recently was in a session with the Executive branch’s Office of National Drug Control Policy (ONDCP), also known as the Drug Czar’s Office, and shared the attached PowerPoint presentation. While the slides are primarily about substance abuse prevention (and treatment), the concept illustrated in slides 4 and 5 appears to be a very good metaphor that applies to many of our social problems including homelessness in that our solutions are like a jigsaw puzzle with pieces that don’t necessarily fit together, and we may have missing pieces and don’t even know it. If it is important to be evidence-based in our interventions, isn’t it also important to be evidence-based in how we provide support via tools, training, technical assistance, and quality assurance/quality improvement? My colleagues and I have asked this question for over 20 years. Ten years ago, Victoria Chien Scott and Jason Katz and I published an article called Toward an Evidence-Based System for Innovation Support for Implementing Innovations with Quality: Tools, Training, Technical Assistance, and Quality Assurance/Quality Improvement in the American Journal of Community Psychology. We discussed the importance of developing evidence-based approaches to the four major types of support (tools including websites, training, TA, and QA/QI). Since that time, we have worked on furthering the science and practice of support with a focus on TA. One of our major activities has been to systematically review the literature on TA and point to major implications for the science and practice of TA. 
In 2016, Jason Katz and I published Technical Assistance to Enhance Prevention Capacity: A Research Synthesis of the Evidence Base in Prevention Science. Now, in 2022, Victoria Scott, Zara Jillani, Adele Malpert, Jennifer Kolodny-Goetz, and I have published A Scoping Review of the Evaluation and Effectiveness of Technical Assistance in Implementation Science Communications. This new systematic scoping review of two decades of the scientific literature plainly reveals the state of the science of technical assistance and has many implications for improving both the science and practice of TA, particularly in the context of evaluating TA. If we want to help improve the world of intervention supports, then funders, researchers/evaluators, support personnel such as TA providers, and other key stakeholders must help grow and use the evidence of effective support. Contributed by founder Abe Wandersman

The onset of the COVID-19 pandemic in early 2020 hit schools hard. School closures and social distancing mandates resulted in a dramatic change, literally overnight, in the way that schools provided students with an education. Since then, the list of questions on the minds of school personnel as well as students and their families has certainly grown well beyond the concern about what period math will be. Questions such as these might come to mind: “Will we start the school year with all teachers and students in the physical classroom on the school campus? If so, will we need to switch to a virtual platform at some point in the year? Will that switch be at a moment’s notice like it was before?” And, even such thoughts as these: “Will there even be a prom or graduation ceremony this year?” or “I wonder what happened to that student whose family I wasn’t able to connect with during the pandemic. I hope they are okay.”

I know that many of you are interested in community transformation. Therefore, you might be interested in our work on developing a Community Transformation Map. Below is a blog post from the Institute for Healthcare Improvement’s website. Editor's note: This piece was originally published by the Institute for Healthcare Improvement on August 3rd and has been reposted here with the author's permission. Community-level change is likely to be our best bet for chipping away at pervasive health inequities, yet communities are each nestled inside local, regional, and national policies, attitudes, and expectations. Can such diverse ecosystems be changed? Yes, but it requires more than change. It requires a transformation.
To meet this challenge, the Spreading Community Accelerators through Learning and Evaluation (SCALE) initiative from the Institute for Healthcare Improvement (in partnership with Communities Joined in Action, Community Solutions, and Network for Regional Healthcare Improvement) developed a community transformation roadmap for achieving a culture of health. The development, use, and evaluation of this roadmap are described in the American Journal of Orthopsychiatry and summarized below.

Sexual assault is a serious problem on college and university campuses. A new step-by-step guide can help university leadership understand how their efforts align with best practices in prevention and what support must be provided to prevention staff to ensure high-quality implementation.

Colleges and universities in the United States have the responsibility for ensuring the on-campus safety of almost 20 million students. One area of student safety and wellness that has received increased attention in recent years has been campus-based sexual assault prevention. While the federal government and researchers have both been pursuing new strategies for prevention and response to sexual assault and harassment, evidence suggests that these efforts have not yet been successful. As many as 25% of college students have reported being sexually assaulted or harassed – a large number given the range of negative impacts sexual assault and harassment can have on survivors (including an increased risk of PTSD, depression, anxiety, and substance abuse).

The second webinar, which focuses on readiness in healthcare for chronic disease as a result of COVID-19, will be held on Thursday, November 19 from 2:00-3:00pm EST (link to register forthcoming, tentative title: “Cancer, Heart Disease, Diabetes, and Chronic Diseases Have Not Gone Away Because of COVID: How Can We be Ready to Optimize Chronic Condition Care?”). If you have any questions or would like more information about the webinars, please email Brittany Cook at [email protected].

As evaluators, we believe in turning the evaluative lens.

We believe in accountability. We believe in transparency. And we believe in admitting when we were wrong.

Our first international webinar was a success! With our friends and colleagues Julia Moore and Sobia Khan from the Center for Implementation, we delivered a fully virtual and interactive two-hour event on Readiness and Implementation Science. We were so excited to have participants from North America, Australia, and Africa join us. Not only did we get to present and train others on Readiness, but we also got to hear from almost 120 people what their thoughts were and how they might apply it to their own work. One major goal of the Wandersman Center is to spread our ideas and readiness framework in a way that inspires action and helps organizations, and we're excited and grateful for this opportunity to do so. Look out for more trainings or tools from us, and if you're interested in hearing more about this webinar, please email us at [email protected].

Randy brings to The Wandersman Center his strong experience as a public health practitioner, thus grounding the reality of implementation in real world situations. He has a long history of linking public health practice with academic and research partners to advance translation, dissemination and quality implementation. Randy’s participation with the work of the Wandersman Center will help bring that lens of real world public health practice to our work.

“Before the Coronavirus Outbreak, A Cascade of Warnings Went Unheeded, Government Exercises, including a pandemic simulation last year, made it clear that the U.S. was not ready for a crisis like the coronavirus”

Re: “Halting Virus will Require Harsh Steps, Expert Says: Near Total Cooperation from Public is Key to Isolating Clusters of Infection.” (NYT, March 23, 2020). There is Good News. China has turned the curve on the coronavirus (no new cases as of 3/19/20); South Korea, Singapore, and Hong Kong internationally are containing the virus….but America is not ready. There are things that these countries are doing that appear to work (called evidence-based practices). Why can’t we do the same thing? Are we ready to adapt these methods to this country? Of course, it will take hard work and “near total cooperation from public” to stay 6 feet apart from everyone else, work from home (or not at all) for weeks or months, have no meetings or parties, not go to gyms, sporting events, concerts, bars, or restaurants, and maybe even not leave our neighborhoods or houses for a while. But it’s better than months of sickness all around us, our doctors and hospitals overwhelmed with more patients than they can care for, and our parents and grandparents denied intensive care because there isn’t enough, and dying before they have to.

“Well, they blew up the chicken man in Philly last night…..”

We were pleased to launch two Jersey-centric projects this month. First, we are working with JBS International, the OMNI Institute, and NJAMHAA to help build organizational capacity for Motivational Interviewing and Cognitive Behavioral Therapy. We met the full project team in Trenton to discuss project goals and provide an overview of readiness. Many members of our team have a background in the behavioral health and substance abuse treatment field, so it is great to be working back in that content area. The idea of motivation x capacity derives from the concept of being willing and able and our experiences in direct clinical services. History circles back around!

2019 was a busy year for us and we have our annual report to show for it! The Wandersman Center applied the R=MC2 heuristic in over 16 projects with partners across the country. In this report, we share our accomplishments and advancements in how readiness can be used to promote change for social good. Some of our more notable advancements include modifications to our assessment phase, a new process to improve CMOR and how we build readiness, and our recently released Prevention Readiness Guide. While we learned a lot this year and are proud of the work we’ve done, we recognize that we still have a big to-do list, and the report outlines some of these priority areas for 2020 (including the development of our interactive web platform for readiness)! We’re excited for what’s to come in 2020 and eager to share all of it with you!

By the time I hit publish on this post, it may already be 2020 where you live. HAPPY NEW YEAR to our friends and colleagues in Australia, Japan, India, and anywhere east of Riyadh and Istanbul. For the rest of our US-based team, we still have a few hours to finish up our task lists, our cleaning, and our blog posts before heading out for the night [actually staying in and eating Doritos with 5-year-olds]. We here at the Wandersman Center managed to cram a bunch into the final month of the decade. First, we finally put out our Readiness Building Guide. This effort was the culmination of a massive amount of thinking and writing over the past year, informed greatly by our experiences working on sexual assault prevention in the military with colleagues at the RAND Corporation. Read all about it and download it at the following link.

I read a lot of books. Here’s my ranking for 2019, from best to worst. There are a lot of business books here because a) we have a business, and b) we want to tap into the broader literature to better understand how we can build momentum in organizations.
After all, humans have been implementing organizational change for at least 12,000 years. Let’s not limit ourselves to the last decade of implementation science literature.

If I had to summarize all these books into one phrase: Be open and honest about your work, or, as the Bard says: This above all: to thine own self be true.

The New York Times recently published an article about inequality. Recent evidence suggests that the differences in income do not match measures of differences in actual skills, intelligence, and personality traits. In other words, most low-wage workers are underpaid, and many of the highest-paid professionals are overpaid according to these metrics. “The average African-American adult with a graduate degree demonstrates the same level of cognitive ability as the average person in the top 1 percent of income. Yet 99 percent of African-Americans with graduate degrees do not have incomes high enough to be in the top 1 percent.” The authors identified several factors contributing to this inequality, including well-connected interest groups manipulating markets for their constituencies’ benefit, professional organizations blocking access to jobs by requiring credentials to perform specific tasks, and zoning boards blocking access to housing markets and perpetuating social segregation.

Abe in repose following the annual AEA dinner.

The year was 1999. Cher’s Believe and TLC’s No Scrubs were burning up the charts. It was the best movie year ever. Gas cost $1.17 a gallon. And, an intrepid group of young evaluators came up with Getting to Outcomes.

As long as I’ve known about evaluation, I’ve known about David Fetterman. Together with my colleague, Abe Wandersman, he developed the field of empowerment evaluation in the mid to late 1990s. However, David also draws from an anthropological background when he approaches research and evaluation. Recently, he published the 4th edition of his Ethnography text. Knowing nothing about this area of his work (nor having read previous editions), I dove in. David took the cover photo himself on his way to base camp! This isn’t a book review. I don’t have any referent point with which to judge the quality of the book, except that I know David generally does quality work. Instead, I’d like to zero in on two methodological topics that stuck out: Unobtrusive Measures and the Analysis of Qualitative Data. This first article in a two-article series deals with unobtrusive measures.

Our team recently got back from the annual American Evaluation Association's conference in Minneapolis, MN. AEA is the premier conference for evaluators and methodologists who work in the social and governmental sectors to share their recent innovations, results, and lessons learned. Like all great conferences, the action happens both inside and outside of the sessions. We have many more comments and reflections, but we first wanted to share some work that we presented on the SCALE initiative.

An organization has a thing they are trying to do. They then need to train up and support the people within that organization to do that thing. In my case, the thing was coaching a bunch of five- and six-year-olds. And, like organizations, my readiness to coach varied throughout the season. And, like well-functioning organizations, I was able to use data to help monitor performance and improve.
